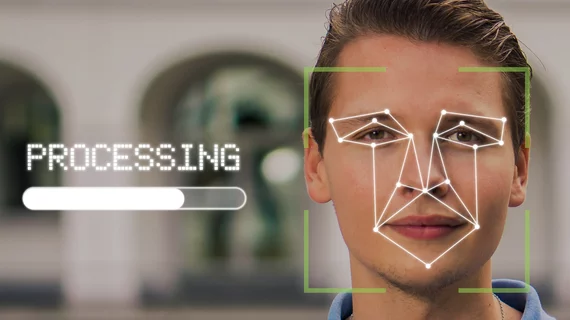

Only two-thirds of U.S. healthcare consumers are OK with surgeons using digital facial recognition to avoid medical error by confirming patient identity.

And less than half would greenlight the technology for researchers using diversified image data to advance precision medicine.

These are two key findings from a survey-based study published this month in PLOS One.

Senior author Jennifer Wagner, JD, PhD, of Penn State and colleagues collected responses to around 4,000 surveys.

The respondent pool comprised a representatively diverse mix of the population in terms of age, geographic region, gender, racial and ethnic background, educational attainment, household income and political views.

Half the field registered their attitudes on the general use of biometrics for scenarios in which they might find themselves asked to participate.

The other 2,000 or so were specifically asked to project their openness to the use of facial recognition technology in various healthcare use cases in clinical as well as research settings.

In addition to the precision medicine research scenario noted above, the team found a majority of respondents unfavorably disposed toward the use of facial recognition to monitor their symptoms or emotions.

“Additional research would be useful to understand what factors are contributing to the perspectives of those who expressed they were unsure about these two uses or expressed these two uses were unacceptable,” Wagner and co-authors comment in their discussion.

Other noteworthy findings:

- 55.5% of respondents indicated they were equally worried about the privacy of medical records, DNA and facial images collected for precision health research.

- 24.8% said they would prefer to opt out of the DNA component of a study.

- 22.0% reported they would prefer to opt out of both the DNA and facial imaging components of a study.

Among the research limitations the team acknowledges is a lack of scenarios offering alternatives to facial recognition, information on cost considerations or evidence of the technology’s effectiveness.

Katsanis et al. conclude:

“Ethical, legal and social implications (ELSI) research is needed to understand how facial images and imaging data are being managed and used in practice and how familiar research professionals are with the privacy and cybersecurity aspects of their work. Additional ELSI research is also needed to examine whether and how a ‘HIPAA knowledge gap’ (i.e., the gap between what individuals think HIPAA allows or disallows and what HIPAA actually allows with their data) or limited data literacy (e.g., comprehension of concepts such as ‘de-identified data’) influences trust in healthcare organizations and professionals and support of or opposition to precision health initiatives, healthcare applications of facial recognition technology and policy approaches.”

In coverage of the research by the news operation at Ann & Robert H. Lurie Children’s Hospital of Chicago, lead author Sara Katsanis of that institution suggests healthcare providers as well as medical researchers need to work harder to win broad buy-in on emerging healthcare technologies.

“Our results show that a large segment of the public perceives a potential privacy threat when it comes to using facial image data in healthcare,” Katsanis says. “To ensure public trust, we need to consider greater protections for personal information in healthcare settings, whether it relates to medical records, DNA data or facial images.”

The study is available in full for free.